- You are here:

- Home »

- Uncategorized »

- Excel Is a Workhorse. But When Have You Pushed It Too Far?

Excel Is a Workhorse. But When Have You Pushed It Too Far?

Data & Analytics

Half a million rows, a progress bar creeping at 1% every two seconds, and a deadline breathing down your neck. Sound familiar? Here is what to do about it.

Let’s be honest. Excel has earned its reputation. It’s installed on virtually every work computer on the planet, it speaks the language of every stakeholder who has ever asked for “a quick spreadsheet,” and for a huge range of tasks, it remains genuinely the right tool. Pivot tables, quick financial models, ad-hoc calculations, and reporting summaries: Excel handles all of this with ease. Nobody is here to throw shade at Excel.

The site owner may receive a commission from links included on this page.

But there comes a point, and many analysts know exactly what it feels like, where Excel stops being a tool and starts being an obstacle. A dataset of 500,000 rows. An RFM analysis that’s been running since last Tuesday. A progress bar inching forward at 1% every two seconds. That’s not a workflow. That’s a hostage situation.

So how do you know when you’ve genuinely hit the ceiling? And what do you actually do about it?

Understanding Why Excel Struggles at Scale

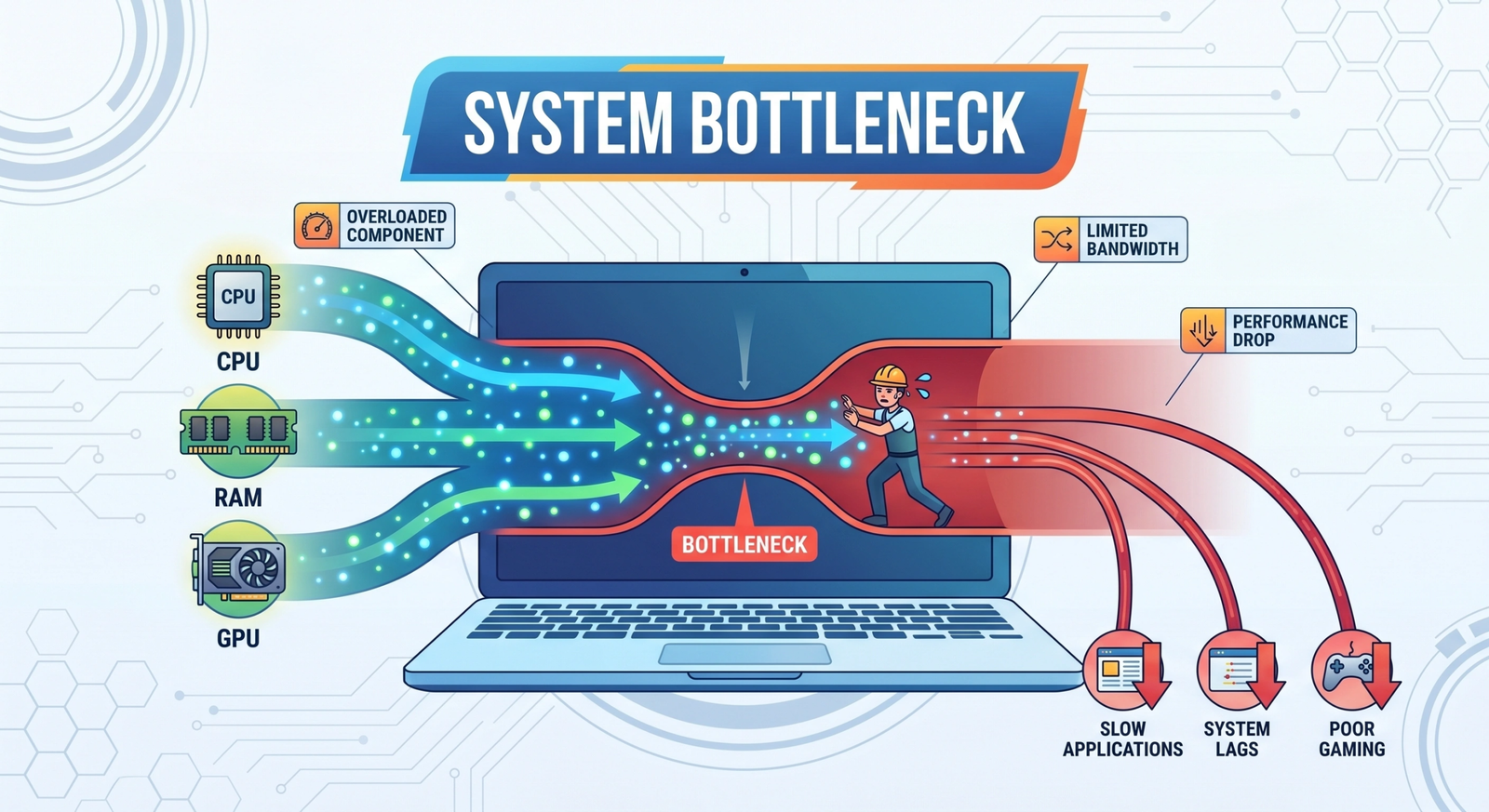

Excel’s core architecture was designed around a cell-based, row-oriented calculation model. Every formula you write recalculates across rows and columns. That’s fine for thousands of records. Once you’re at hundreds of thousands, you’re asking the engine to do something it was never really built for.

The row limit in a standard Excel worksheet is just over 1 million rows. But hitting that ceiling physically is almost beside the point. The real problems show up long before you get there: sluggish recalculations, memory pressure, file sizes that take forever to save, and complex formulas that grind to a halt. The 500K row RFM problem that opened this article is a textbook example. Excel isn’t broken. It’s just working exactly as designed, and the design has limits.

- Calculations take minutes (or longer) to complete

- Your file regularly exceeds 50–100MB

- You’re frequently hitting memory warnings

- You find yourself breaking data across multiple workbooks just to cope

- The same analysis is a recurring task, not a one-off

- Colleagues can’t open the file without their machine struggling

The First Stop: Power Query and Power Pivot

Before you jump ship entirely, it’s worth noting that Excel has a more powerful layer built in that most people underuse. Power Query handles data transformation and loading. Power Pivot handles data modeling and aggregation using a formula language called DAX. Together, they can handle workloads that would cripple a regular worksheet.

The key here is understanding why this works better. When you load data into the Power Pivot data model, it’s stored using the VertiPaq engine, a columnar, in-memory compression system originally built for Microsoft’s enterprise analytics products. VertiPaq is the same engine powering Analysis Services and Power BI. It stores data by column rather than by row, so aggregations only need to read the columns they care about rather than scan entire rows. It also aggressively compresses data, so more fits in memory and operations run faster.

“Loading data into the data model rather than working from worksheet data is not just a stylistic choice. The architecture makes a huge difference in scale.”

One more thing to understand about Power Query: it supports query folding, where transformations are pushed back to the data source rather than pulling everything into memory first. If you’re connecting to a database, this is a significant efficiency gain. The rule of thumb is to filter and aggregate aggressively in Power Query before any data reaches the model.

One caveat: VertiPaq is still an in-memory engine. If your dataset is enormous and your machine has limited RAM, you’ll still feel the squeeze. It’s a major improvement over standard worksheets, but it’s not a silver bullet. The machine you’re working on still matters.

Python: The Analysts’ Escape Hatch

For analysts who are open to writing a bit of code, Python is where things get genuinely fast. A 500K row RFM analysis that Excel labours over for hours runs in seconds in Python. The pandas library is the standard choice for data manipulation, and it handles aggregations, grouping, and scoring with very little code.

import pandas as pd

df = pd.read_csv("your_data.csv")

snapshot_date = df["order_date"].max() + pd.Timedelta(days=1)

rfm = df.groupby("customer_id").agg(

Recency=("order_date", lambda x: (snapshot_date - x.max()).days),

Frequency=("order_id", "count"),

Monetary=("revenue", "sum")

).reset_index()

rfm["R"] = pd.qcut(rfm["Recency"], 5, labels=[5,4,3,2,1])

rfm["F"] = pd.qcut(rfm["Frequency"].rank(method="first"), 5, labels=[1,2,3,4,5])

rfm["M"] = pd.qcut(rfm["Monetary"], 5, labels=[1,2,3,4,5])

rfm["RFM_Score"] = rfm["R"].astype(str) + rfm["F"].astype(str) + rfm["M"].astype(str)

rfm.to_excel("rfm_output.xlsx", index=False)If you need even more speed on large datasets, look at polars, a newer Python library built in Rust that is dramatically faster than pandas for certain operations. It’s particularly good when you’re working with very wide or very long datasets and need results quickly.

A word on Python in Excel, which Microsoft has been rolling out: it runs your Python code on Microsoft’s cloud servers rather than locally. That means your data gets uploaded before processing, and you’re subject to compute limits and potential timeouts on large datasets. For lightweight tasks and quick visualisations it has its uses, but for a heavy RFM workload at this scale, running Python locally as a standalone script will always be faster and more reliable.

The practical pipeline looks like this: raw data goes into Python, Python does all the heavy lifting, and a clean summarised file comes out the other end for distribution in Excel. Excel becomes the presentation layer, which is what it’s actually very good at.

SQL: Often the Most Natural Fit

If your data lives in or can be moved into a database, SQL might be the most elegant solution of all. RFM analysis maps almost perfectly to SQL’s native strengths. Grouping by customer, aggregating transaction counts and revenue, and calculating recency from date functions are exactly what SQL was designed to do, and it does it at a scale that dwarfs what Python or Excel can manage locally.

Most analysts already have access to some form of SQL database at work. PostgreSQL, MySQL, Microsoft SQL Server, and BigQuery all handle this kind of aggregation well. If you don’t have direct access, it’s worth talking to a DBA about getting a table loaded and the right permissions granted. One well-written query can replace hours of Excel frustration.

The bonus is that Excel can connect directly to a SQL database through Power Query, so if stakeholders still need their spreadsheet view, you can pull the summarised results straight into Excel without any manual file exporting.

What About DuckDB?

DuckDB deserves a special mention because it’s not yet as well known as it should be. It’s a local, serverless SQL engine that you can run directly on your laptop with no setup overhead. It can query CSV files, Parquet files, and other formats directly without importing data into a separate database system first. For a 500K row dataset, DuckDB is fast, genuinely fast. It’s a strong option for analysts who want SQL’s expressive power without needing to spin up infrastructure.

What If Your Local Machine Is the Bottleneck?

Here’s a scenario that comes up more than you’d expect. You’ve written the Python script. The logic is sound. But your laptop is ageing, RAM is tight, and running a half-million row analysis locally still feels sluggish. Or maybe you’re a small business that has genuinely outgrown Excel but isn’t ready to commit to an enterprise platform like Microsoft Fabric, which comes with a corresponding enterprise price tag.

This is where cloud compute becomes interesting, and more accessible than most people realise.

RunPod is a cloud compute platform that lets you spin up high-performance virtual environments on demand and pay only for the seconds you actually use. It’s primarily known in AI and machine learning circles for GPU workloads, but it also offers Serverless CPU pods specifically designed for data processing tasks, the kind where GPU parallelism isn’t needed, but raw CPU speed and memory headroom are. We’re talking up to 3.75 GHz cores, DDR5 memory, and NVMe SSD storage, available in Compute-Optimized or General Purpose configurations depending on what your workload needs.

The practical workflow looks like this. You upload your dataset, spin up a RunPod CPU pod with a Python environment pre-configured, run your aggregation or RFM analysis, export the results, and shut the pod down. You’re billed by the second. If your analysis takes four minutes, you pay for four minutes. There’s no monthly subscription, no idle cost between runs, and no infrastructure to manage beyond clicking deploy.

“For a small business that has hit Excel’s ceiling but can’t yet justify an enterprise data platform, paying for a few minutes of cloud compute per analysis run is a genuinely compelling middle ground.”

The other reason RunPod is worth flagging here is the growth trajectory it supports. Today, the problem is a slow RFM analysis. In six months, as the business grows, the problem might be building a customer churn model or running demand forecasting. RunPod‘s GPU pods handle those workloads too, on the same platform and account. You’re not switching tools as the complexity increases; you’re just selecting different hardware for the job.

One caveat worth being upfront about: RunPod is not a point-and-click analytics tool. You’ll need a basic comfort level with Python and, ideally, a familiarity with Docker containers or Jupyter notebooks to get the most out of it. It’s infrastructure, not a finished product. But for an analyst who has already made the leap to writing Python scripts, the additional step to running those scripts on a cloud pod is a small one.

Other Alternatives Worth Knowing About

The Microsoft stack isn’t your only option, and depending on your organisation and skillset, some of these alternatives might be a better fit.

| Tool | Best For | Skill Required |

|---|---|---|

| Google Sheets + BigQuery | Cloud-native teams already in Google Workspace; pull summarised results from massive datasets into a familiar sheet | Low to moderate |

| Tableau / Looker | Heavy visualisation and dashboarding needs; connects directly to databases and data warehouses | Moderate |

| Databricks / Snowflake | Enterprise-scale data warehousing; for organisations managing very large volumes across multiple teams | High (typically requires data engineering support) |

| R (with dplyr / data.table) | Statistical analysis at scale; data.table in particular is exceptionally fast for large aggregations | Moderate |

| Apache Spark (via PySpark) | Distributed computing for truly massive datasets that don’t fit on a single machine | High |

When It’s Time to Make a Real Move: Power BI and Fabric

If large dataset analysis is a regular part of your job, not a one-off, the time you spend fighting Excel’s limitations is time you could spend on actual analysis. That’s the signal to consider a proper escalation.

For analysts already in the Microsoft ecosystem, Power BI is the most natural next step. It’s built on top of the same VertiPaq engine you’d be using in Power Pivot, so the concepts transfer directly. It handles tens of millions of rows comfortably in Import mode, and its DirectQuery mode lets you connect live to databases without importing data at all. DAX knowledge from Power Pivot carries over almost entirely. The learning curve from Power Pivot to Power BI is genuinely shallow compared to picking up an entirely new tool from scratch.

Microsoft Fabric takes things further, combining data storage, pipelines, notebooks, and Power BI into a single unified platform. It’s aimed at organisations that need a full end-to-end data infrastructure, competing in the same space as Databricks and Snowflake. Most individual analysts won’t be operating Fabric directly, but it’s worth understanding as your organisation’s data maturity grows.

And here’s the thing that often gets overlooked in these conversations: moving to a more robust tool doesn’t mean abandoning Excel. Stakeholders who want spreadsheets can still have their spreadsheets. The difference is that Excel becomes the final reporting layer, fed by a Power BI semantic model, a Python script, or a SQL query, rather than the engine trying to do everything at once. That’s a much healthier architecture for everyone involved.

The Bottom Line

Excel is genuinely excellent at what it was designed for. But using it for large-scale data processing because it’s familiar is the analytical equivalent of using a kitchen knife to cut down a tree. It might technically work, but there are better tools for the job and your time is worth more than a progress bar at 1%.

The path forward depends on where you are. If you need to stay in Excel, Power Query and Power Pivot will take you further than you think. If you’re ready for a bit of code, Python or SQL will change the way you work. If your local machine is the bottleneck and a full enterprise platform feels like overkill right now, RunPod‘s Serverless CPU is a pay-as-you-go bridge that scales with you. And if large datasets are your daily reality across the whole organisation, Power BI or one of the other platforms in the table above is worth the investment of learning. None of these transitions are as daunting as they look from the outside, and every one of them pays back the time you spend on them within a few weeks of regular use.

Excel will always have a place. Just make sure it’s the right place.

About the Author James

James is a data science writer who has several years' experience in writing and technology. He helps others who are trying to break into the technology field like data science. If this is something you've been trying to do, you've come to the right place. You'll find resources to help you accomplish this.